Leveraging AI to efficiently test AI chips

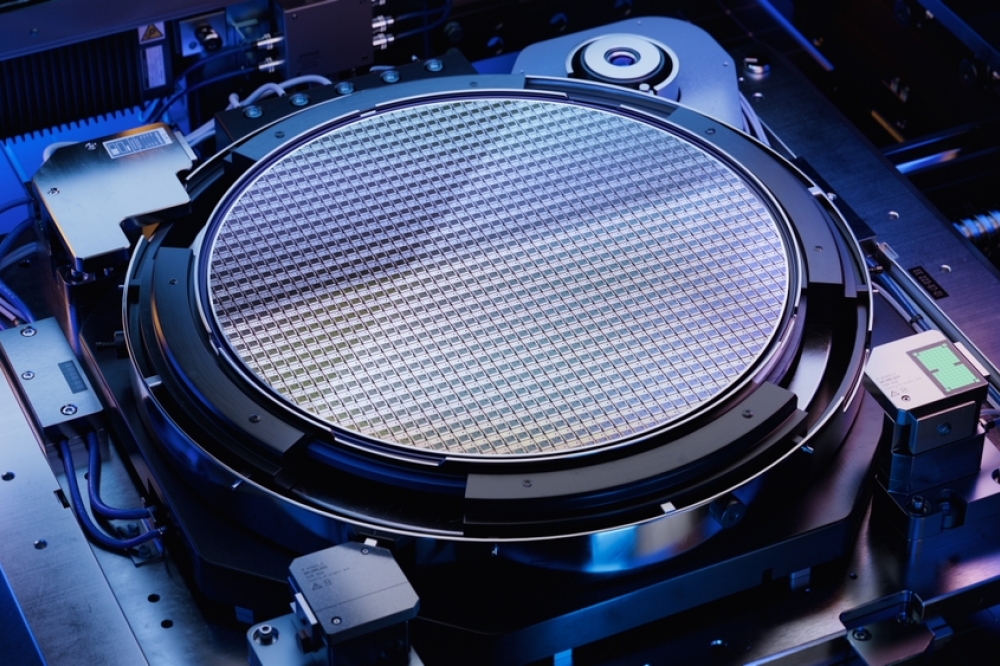

The semiconductor devices that bring artificial intelligence (AI) and machine learning (ML) to the cloud as well as to the edge present significant test challenges. The semiconductor test industry is well positioned to address these challenges by leveraging AI and ML techniques to analyze and correlate data across the entire semiconductor value chain.

BY IRA LEVENTHAL, VICE PRESIDENT, APPLIED RESEARCH & TECHNOLOGY, ADVANTEST AMERICA, INC.

EMERGING ARTIFICIAL-INTELLIGENCE (AI) and machine-learning (ML) applications are driving a revolution in the semiconductor industry. Traditional central processing units (CPUs) have yielded ground to graphics processing units (GPUs) to handle the massively parallel computations common in AI and ML programming, and the GPUs themselves are facing competition from a new generation of AI processors with architectures that are optimized for AI and ML inferencing and training. Many of these AI processors are being developed by new entrants into the semiconductor space such as the hyperscaler companies and startups.

The semiconductor test industry is playing a critically important role in helping its customers solve the problems brought on by the move to dedicated AI processors and other high-performance compute devices. The test industry is also addressing the accompanying challenges of shrinking process nodes, the proliferation of heterogeneous-integration (HI) packaging, the deployment of advanced communications architectures, and chipmakers pushing the limits of power consumption. Initiatives include real-time adaptive testing, predictive maintenance, DC parametric test improvements, in situ thermal test and optimization, and efficient workflow balancing. The key to addressing the challenges is to leverage AI and ML in the production and test processes. Advantest is deploying multiple capabilities to enable AI device test, with advances in hardware, interfacing, handling, thermal management, and data analytics, including AI and ML.

Pre- and post-chiplet worlds

The traditional CPUs of the pre-chiplet world can be described as “purposeful,” or focused on specific well-understood applications. In contrast, the new chiplet-based AI devices may ultimately serve use cases that had yet to be identified when the devices were designed. When a new application arises, the devices cannot be redesigned or even retested in a cost-effective manner. What is needed is a test strategy that generates sufficient data to map these devices to new applications after test is completed.

With traditional test in the pre-chiplet world, the device under test (DUT) consists of a package containing one or a few devices. In such applications, the tester performance must stay ahead of the DUT. Binning is performance-based, requiring that tester resources be faster and more accurate than the DUT. In this world, the tester typically has direct access to most DUT pins, and each test insertion – from post-silicon validation (PSV) to system-level test (SLT) – has well-defined purposes, including the collection of test data. However, the data has typically been stored without context, and has been siloed and shared only as required to address major issues such as significant yield loss.

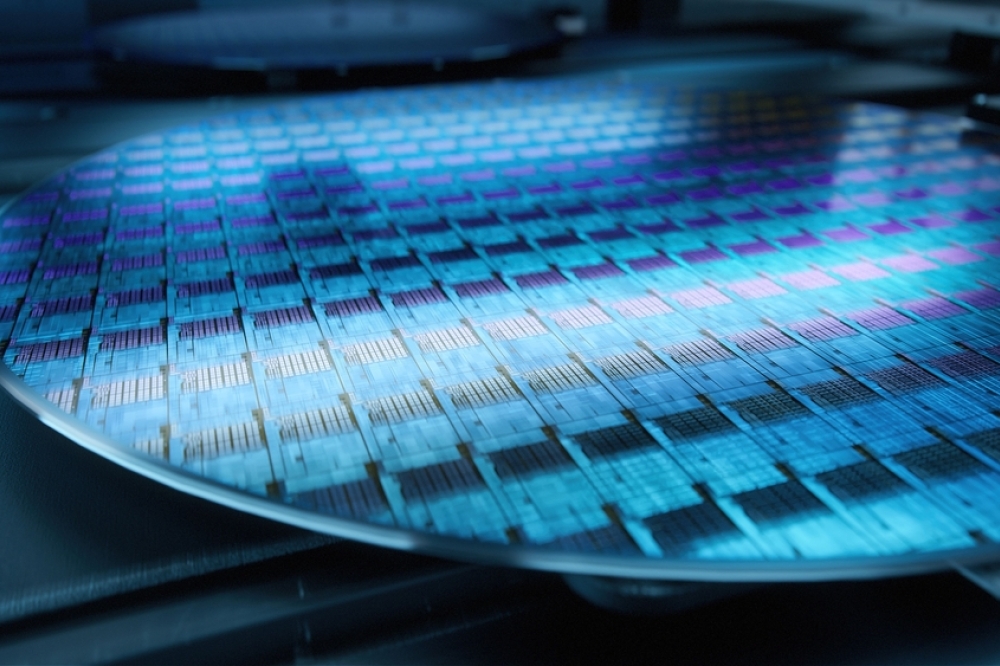

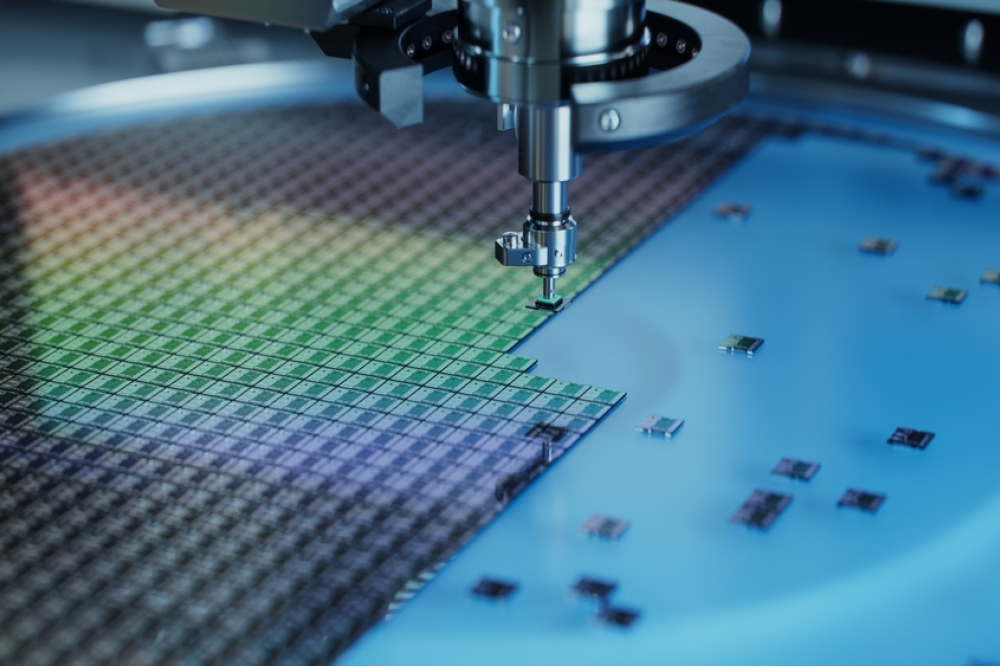

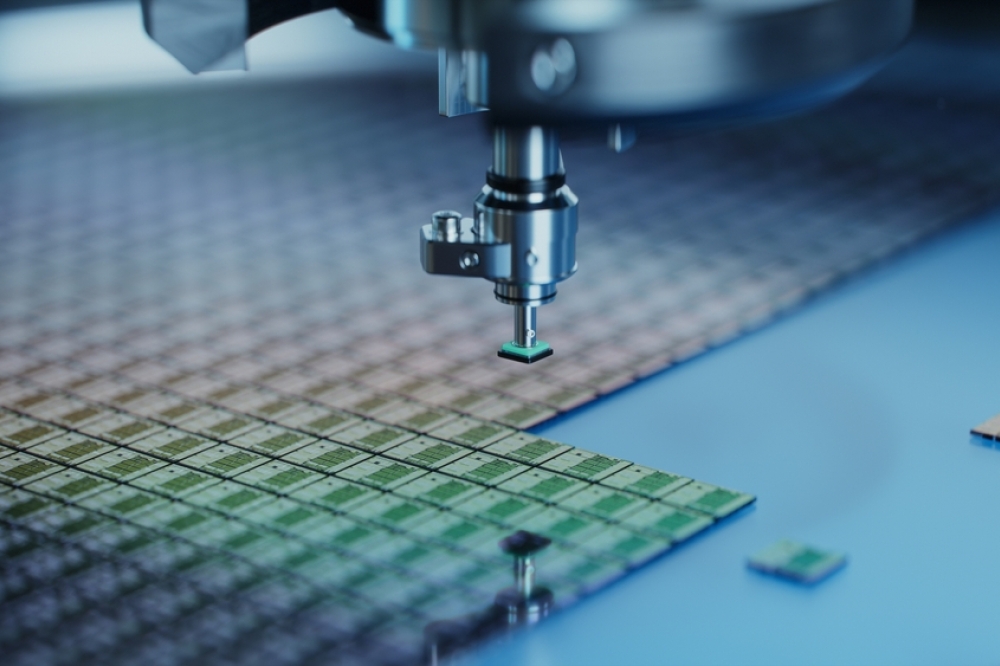

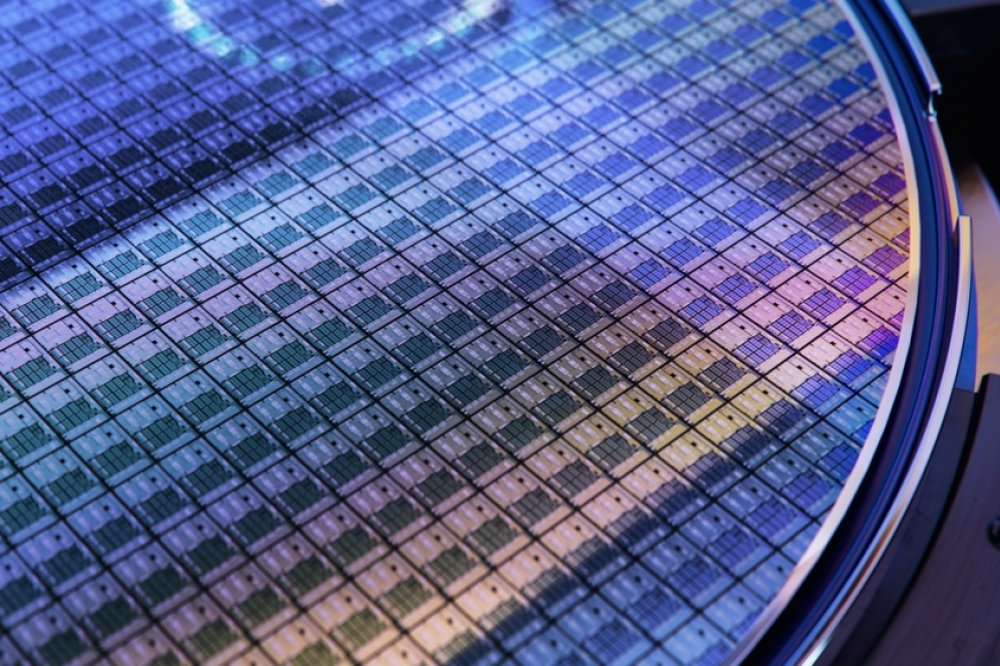

The industry is now moving to chiplet-based modules, using a “Lego-like” approach to integrate CPU, GPU, cache, I/O, high-bandwidth memory (HBM), and other functions. In the new world of chiplets1 and HI, the DUT is a complex multichip system with the integration of many devices in a single 2.5D or 3D package. Consequently, the tester can only access a subset of individual device pins. Nevertheless, at each test insertion, the tester must be able to extract valuable data that is used to optimize the current test insertion as well as other design, manufacturing, and test steps. With limited pin access, the tester must infer what is happening on unobservable nodes. To best achieve this goal, it is imperative to extract the most value possible out of the data that can be directly collected across all manufacturing and test steps, including data from on-chip sensors. The test flow in the chiplet world already includes PSV, wafer acceptance test (WAT), wafer sort (WS), final test (FT), burn-in, and SLT, and additional test insertions to account for the increased complexity of a package with multiple chiplets are not feasible from a cost perspective. Adding to the challenge, binning goes from performance-based to application-based. In this world, the tester must stay ahead of the system – the tester must be smarter than the complex system-under-test.

One example of application-based real-time binning is smart pairing, also called die matching. Smart pairing considers factors such as voltage, speed, and power consumption to ensure that all dies destined for a particular package have compatible electrical and thermal characteristics, minimizing differences related to factors such as reliability, signal delays, and power imbalances. As another example, a multi-core device might be designed for an application that requires the cores be closely matched. A subsequent application might arise that requires fewer cores or that can tolerate more relaxed matching requirements. Real-time binning can select the highest performance, highest quality devices for the first application while reserving other devices for the second less stringent application.
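As a rough illustration of application-based binning, the sketch below assigns a multi-core device to one of two hypothetical applications according to how many cores pass and how closely their maximum frequencies match. The field names, thresholds, and bin labels are all assumptions chosen for illustration; they do not come from any production flow.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CoreResult:
    """Per-core measurements for one device (hypothetical fields)."""
    fmax_mhz: float   # maximum passing frequency of the core
    passed: bool      # whether the core passed functional test


def bin_device(cores: List[CoreResult],
               strict_spread_mhz: float = 50.0,
               relaxed_spread_mhz: float = 200.0,
               relaxed_min_cores: int = 6) -> str:
    """Return an application bin; all thresholds are illustrative assumptions."""
    good = [c.fmax_mhz for c in cores if c.passed]
    if not good:
        return "REJECT"
    spread = max(good) - min(good)
    # First application: every core works and the cores are closely matched.
    if len(good) == len(cores) and spread <= strict_spread_mhz:
        return "APP_A_MATCHED"
    # Second application: fewer cores needed, looser matching tolerated.
    if len(good) >= relaxed_min_cores and spread <= relaxed_spread_mhz:
        return "APP_B_RELAXED"
    return "REJECT"


# Eight closely matched cores, then a device with one failed core and wider spread.
tight = [CoreResult(3000 + 5 * i, True) for i in range(8)]
loose = [CoreResult(3000 + 25 * i, i != 3) for i in range(8)]
print(bin_device(tight))
print(bin_device(loose))
```

In a real flow, a trained model would likely replace the fixed thresholds, but the decision structure (strictest application first, then fallbacks) would be similar.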

The rules-based decision models that have worked well in the pre-chiplet world will continue to serve certain purposes. But for chiplet-era production the ML model is the essential path forward to meet the complex real-time decision-making requirements.

Detection of subtle failure mechanisms in AI devices requires a wide range of input data. In addition, with limited test access in the final package, on-chip sensor data, equipment settings and sensor data, and upstream data from design and manufacturing will all become increasingly important in identifying defects. ML can leverage all this data to fully enable powerful predictive and adaptive test approaches.

In general, the goal is to enable the customer to “shift left” in terms of debug and disposition – catching defects at WS, for example, before the dies are assembled into packaged devices.

Packaging cost and complexity are aggressively increasing in the chiplet/HI world, so it is critical that only known-good die (KGD) make it into a package. An ML model running on a WS system can make real-time decisions about KGD based on data from multiple insertions. Prior research has shown that applying a Gaussian process-based outlier detection model at WAT can prevent many test escapes with minimal overkill (good dies incorrectly marked as defective), with FT yield increases resulting in significant cost savings.2
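To make the idea of outlier screening concrete, the sketch below flags dies whose parametric reading sits far from the rest of the population. It deliberately uses a much simpler technique than the Gaussian process model cited above: a robust z-score based on the median and the median absolute deviation (MAD). The die IDs, leakage values, and the 3.5 threshold are assumptions for illustration only.

```python
from statistics import median
from typing import Dict, List


def robust_z_scores(values: List[float]) -> List[float]:
    """Robust z-scores from the median and median absolute deviation (MAD)."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # No measurable spread: treat every value as an inlier.
        return [0.0 for _ in values]
    # 1.4826 scales the MAD to match the standard deviation for normal data.
    return [(v - med) / (1.4826 * mad) for v in values]


def flag_outlier_dies(measurements: Dict[str, float],
                      threshold: float = 3.5) -> List[str]:
    """Return IDs of dies whose reading is an outlier (threshold is an assumption)."""
    ids = list(measurements)
    scores = robust_z_scores([measurements[d] for d in ids])
    return [d for d, z in zip(ids, scores) if abs(z) > threshold]


# Hypothetical leakage-current readings in microamps.
leakage_ua = {
    "die_01": 1.02, "die_02": 0.98, "die_03": 1.01,
    "die_04": 0.99, "die_05": 1.00, "die_06": 1.85,
}
print(flag_outlier_dies(leakage_ua))
```

A median-based score is used here because a single extreme die would otherwise inflate the mean and standard deviation and partly mask itself.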

Keep in mind, however, that there are exceptions to the shift-left rule. For example, it may be beneficial to shift a lengthy test from FT to the right onto an SLT system, where test time is less costly. In either case, an ML strategy can help optimize test flow.

Applying AI and ML in a secure environment

Throughout the test process, the key is to leverage AI and ML to test the AI devices – to analyze test data, to correlate data across multiple wafers, and to track exactly how each die made its way through the assembly process. A vast array of advanced tools and algorithms applied to design, manufacturing, test, and equipment data will provide correlation across the full manufacturing flow to provide insights that will enable flow optimization.

Increasing test requirements will drive the need for AI- and ML-driven predictive methods, with real-time execution of test code enabling automated corrective actions during production to control costs. One test customer has noted that ML-based parametric prediction can reduce parametric test time by 50% or more while successfully identifying performance-matched devices with no impact on quality.3
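One way to picture parametric prediction is a model that estimates a later-insertion parameter from an earlier measurement and skips the later test when the estimate falls comfortably inside the spec limits. The sketch below uses an ordinary least-squares line as a stand-in for whatever ML model a production flow would use. The data, limits, and guard band are invented for illustration.

```python
from typing import List, Tuple


def fit_line(x: List[float], y: List[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def can_skip_test(predicted: float, lower: float, upper: float,
                  guard_band: float) -> bool:
    """Skip only when the prediction clears both limits by the guard band."""
    return lower + guard_band <= predicted <= upper - guard_band


# Hypothetical history: threshold voltage at wafer sort vs. final test (volts).
ws_vth = [0.40, 0.42, 0.44, 0.46]
ft_vth = [0.41, 0.43, 0.45, 0.47]
slope, intercept = fit_line(ws_vth, ft_vth)

for ws_reading in (0.43, 0.49):
    predicted = slope * ws_reading + intercept
    skip = can_skip_test(predicted, lower=0.38, upper=0.50, guard_band=0.02)
    print(f"WS={ws_reading:.2f} V -> predicted FT={predicted:.3f} V, skip={skip}")
```

The guard band is the lever that trades test-time savings against the risk of a missed marginal device; devices predicted near a limit are still measured.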

Aggressive adoption of modern data security technologies will be required to protect sensitive IP in outsourced manufacturing environments. The semiconductor supply chain often includes contract assembly and test services, and test equipment in one facility may process parts from multiple IC providers – presenting challenges for providers who want to secure all aspects of sensitive test IP. A facility can be expected to provide perimeter security, but with complex supply chains it may become difficult to define the perimeter.

One approach is the zero-trust security model. “Zero trust” implies that products and services by default must not trust other products or services. A zero-trust inference environment used in conjunction with an ATE system protects against threats both inside and outside of the traditional network boundaries, reducing the “zone of trust” from the network level to individual nodes or applications. Interactions between zones of trust require continual authentication.
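A small sketch can show one ingredient of this model: authenticating every message rather than trusting a caller because of where it sits on the network. The example below signs each request with a per-node key using HMAC-SHA256 and verifies the signature on every call. The node name, key, and payload fields are hypothetical, and a real deployment would add managed credentials, replay protection, and encryption.

```python
import hashlib
import hmac
import json
from typing import Dict

# Hypothetical per-node secrets; a real system would use managed credentials.
NODE_KEYS: Dict[str, bytes] = {"tester-07": b"example-secret-not-for-production"}


def sign_request(node_id: str, payload: Dict) -> str:
    """Sign a canonical JSON encoding of the payload with the node's key."""
    body = json.dumps(payload, sort_keys=True).encode()
    return hmac.new(NODE_KEYS[node_id], body, hashlib.sha256).hexdigest()


def handle_request(node_id: str, payload: Dict, signature: str) -> str:
    """Verify every request; nothing is trusted by default."""
    key = NODE_KEYS.get(node_id)
    if key is None:
        return "rejected: unknown node"
    body = json.dumps(payload, sort_keys=True).encode()
    expected = hmac.new(key, body, hashlib.sha256).hexdigest()
    # compare_digest avoids leaking information through comparison timing.
    if not hmac.compare_digest(expected, signature):
        return "rejected: bad signature"
    return "accepted"


request = {"die": "X12Y08", "action": "infer"}
sig = sign_request("tester-07", request)
print(handle_request("tester-07", request, sig))                        # valid request
print(handle_request("tester-07", {**request, "die": "X99Y99"}, sig))   # tampered payload
print(handle_request("unknown", request, sig))                          # unregistered node
```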

An effective edge solution should support the full range of input data streams available in semiconductor manufacturing.

Running AI/ML workloads

A key consideration moving forward involves how to run AI and ML workloads in a test environment. The ATE host controller is not a good candidate. It is busy with standard ATE control tasks such as managing HW resources, running scan tests, and logging data. In addition, any changes to the host controller OS or SW configuration trigger costly recorrelation exercises, and hardware-refresh opportunities are limited. It is unlikely that a host controller could be upgraded with an ML-optimized processor, and even were that possible, running ML and sharing the resulting data with OSATs and other third parties could make sensitive test IP vulnerable.

Edge computing provides an effective and efficient way of performing real-time, ML-based decision-making in a test environment. Edge computing offers several benefits for applications in general: it provides low and predictable latency, improves bandwidth efficiency, is scalable, and enables enhanced security and privacy. In the test environment, it can handle chores spanning multiple time domains, providing processing in the milliseconds required for a single touchdown at wafer probe; in near real time, after five or 10 touchdowns, for example; and periodically, after a certain number of wafers or devices have been tested. Finally, at lot end, it can transmit the relevant data to the cloud for post processing and offline analytics.
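The time domains described above can be sketched as a small event dispatcher: one hook per touchdown, a batch step every few touchdowns, a periodic step per wafer, and an upload at lot end. The class, method names, and batch size below are hypothetical and only record which step would run; they do not represent any vendor's API.

```python
from typing import Dict, List


class EdgeAnalytics:
    """Toy dispatcher for the four time domains (all names are assumptions)."""

    def __init__(self, batch_size: int = 5) -> None:
        self.batch_size = batch_size      # touchdowns per near-real-time batch
        self.pending: List[Dict] = []     # touchdowns awaiting batch analysis
        self.lot_data: List[Dict] = []    # everything kept for lot-end upload
        self.events: List[str] = []       # record of which step ran

    def on_touchdown(self, result: Dict) -> None:
        # Real time: a decision is needed within the touchdown's time budget.
        self.events.append(f"realtime:{result['touchdown']}")
        self.pending.append(result)
        self.lot_data.append(result)
        # Near real time: analyze once enough touchdowns have accumulated.
        if len(self.pending) >= self.batch_size:
            self.events.append(f"batch:{len(self.pending)}")
            self.pending.clear()

    def on_wafer_end(self, wafer_id: str) -> None:
        # Periodic: for example, refresh drift statistics once per wafer.
        self.events.append(f"periodic:{wafer_id}")

    def on_lot_end(self, lot_id: str) -> int:
        # Lot end: hand accumulated data to cloud or offline analytics.
        count = len(self.lot_data)
        self.events.append(f"upload:{lot_id}:{count}")
        self.lot_data.clear()
        self.pending.clear()
        return count


edge = EdgeAnalytics(batch_size=5)
for n in range(7):
    edge.on_touchdown({"touchdown": n, "pass": True})
edge.on_wafer_end("W01")
uploaded = edge.on_lot_end("LOT1")
print(edge.events)
```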

An effective edge solution applies analytic models to enable real-time dynamically adaptive test flows.4 The goal is to minimize cost of test (COT), to maximize yield, and, when needed, to perform dynamic failure analysis to find root-cause correlations with other test insertions to facilitate continuous process improvements.

The solution should be compatible with a test vendor’s entire lineup of hardware and software platforms, and it should also have an open architecture to support customer-owned analytics models. It should be reliable, secure, and easy to update. In addition, it should be able to derive insights by integrating all data sources across the entire manufacturing supply chain, including the IDM, foundry, or fabless semiconductor company.

The adoption of edge compute for ML test applications is well underway. Advantest’s recently launched ACS Real-Time Data Infrastructure (ACS RTDI™) platform accelerates data analytics and AI/ML decision-making within a single integrated platform. It collects, analyzes, stores, and monitors semiconductor test data as well as data sources across the IC manufacturing supply chain while employing low-latency edge computing and analytics in a secure zero-trust environment. ACS RTDI minimizes the need for human intervention, streamlining overall data utilization across multiple insertions to boost quality, yield, and operational efficiencies. It includes Advantest’s ACS Edge™ HPC server, which works in conjunction with its V93000 and other ATE systems to handle computationally intensive workloads adjacent to the tester’s host controller.

In this configuration, the ACS Edge provides low, consistent, and predictable latency compared with a datacenter-hosted alternative. It supports a user execution environment independent of the tester host controller to ease development and deployment. It also provides a reliable and secure real-time data infrastructure that integrates all data sources across the entire IC manufacturing supply chain, applying analytics models that enable real-time decision-making during production test.

For its part, the V93000 brings flexibility and performance to the test of HI and other devices. Its wide range of available instruments makes it suitable for the test of a variety of AI, ML, HI, and other chiplet-based devices across a range of test insertions. With instrument cards such as the PS5000, XPS256, and Link Scale, the V93000 enables customers to shift left – for example, using Link Scale to run mission-mode-like tests at WS.5

COT reduction through ML

Initial work by Advantest and our customers has involved COT reduction through the use of ML to identify time-consuming portions of the test flow that can be shortened or eliminated through correlation with previous insertion data. Work to date confirms that adopting ML at the edge provides significant ROI. Additional work over the next one to three years will connect more data across the value chain to develop advanced models that can identify more complex correlations and accurately predict yield, quality, and reliability. Yet another initiative, achievable within five years, is bidirectional optimization across the semiconductor value chain with feed-forward and feed-backward data flow to optimize front-end and back-end processes, with edge-compute resources enabling real-time decision-making and optimization.

Probe cleaning and yield enhancement

In addition to ACS Edge and the V93000, Advantest offers products that leverage AI and ML to enhance the test of AI-enabling devices. For example, Advantest ACS Adaptive Probe Cleaning (ACS APC) applies AI/ML to schedule probe needle cleaning on an as-needed basis. In addition, ACS Engineering AI Studio for Yield Improvement (ACS EASY) is an AI-powered, user-friendly software solution that automates the analysis of yield issues, reducing engineers’ workloads and speeding correction turnaround time.

Conclusion

Chiplets and HI in 2.5D/3D packages are bringing new challenges in achieving yield and reliability targets at acceptable COT levels for the emerging class of AI processors and HPC devices. With rules-based point solutions inadequate for the task, AI and ML techniques have emerged to process test data as well as data from the entire semiconductor value chain to ensure success. Edge computing is a key driver for processing data from chip sensor IP, equipment sensors, parametric test results, and upstream data sources to fully analyze and appropriately react to results. Advantest’s customers have achieved multiple successes by embracing ML and edge computing to address multifaceted challenges with a comprehensive and cohesive set of solutions.

Additional progress will require an “all in” mentality across the semiconductor supply chain to leverage data sharing to enable even more complex models and accelerate the financial benefits that will accrue to all industry participants.