How silicon became the hidden backbone of AI

AI’s next phase will be shaped as much by power delivery and materials as by model capability. The core question isn’t just how fast models can advance, but whether the infrastructure beneath them can keep pace.

By René Jonker, Chief Product Officer, Soitec for Silicon Semiconductor

AI is becoming a power and electricity problem. The industry has been dealing with the power side of scaling for years, but demand is now outpacing the assumptions that data center infrastructure was built on.

While headlines focus on capacity growth, frontier models, and agentic AI, the more pressing issue lies lower in the stack at the silicon and materials layer. Increasingly, engineered substrates are emerging as the hidden backbone, shaping whether AI can continue scaling quickly without making energy use and reliability unsustainable.

Data centers are turning to silicon carbide for efficiency

According to the International Energy Agency (IEA), data centers consumed 415 terawatt-hours (TWh) in 2024, about 1.5% of global electricity consumption. In 2025 this figure approached 500 TWh, and data center consumption is projected to more than double from its 2024 level by 2030 as AI workloads expand.
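
As a rough sanity check, the short sketch below back-calculates the global electricity total implied by the 1.5% share and the 2030 floor implied by "more than double". It uses only the figures quoted above and assumes the doubling is measured against the 2024 value. The outputs are simple arithmetic, not IEA projections.

```python
# Figures quoted from the IEA in the paragraph above.
DC_2024_TWH = 415.0    # data center electricity use in 2024
DC_SHARE_2024 = 0.015  # "about 1.5%" of global electricity

# Global total implied by the share (approximate, because the share is rounded).
implied_global_twh = DC_2024_TWH / DC_SHARE_2024

# Assumption: "more than double by 2030" is measured against the 2024 figure.
min_dc_2030_twh = 2 * DC_2024_TWH

print(f"Implied global electricity use, 2024: ~{implied_global_twh:,.0f} TWh")
print(f"Projected data center use, 2030: more than {min_dc_2030_twh:,.0f} TWh")
```

Under that reading, the quoted share implies a world total of roughly 27,700 TWh, and data centers would need more than 830 TWh by 2030.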

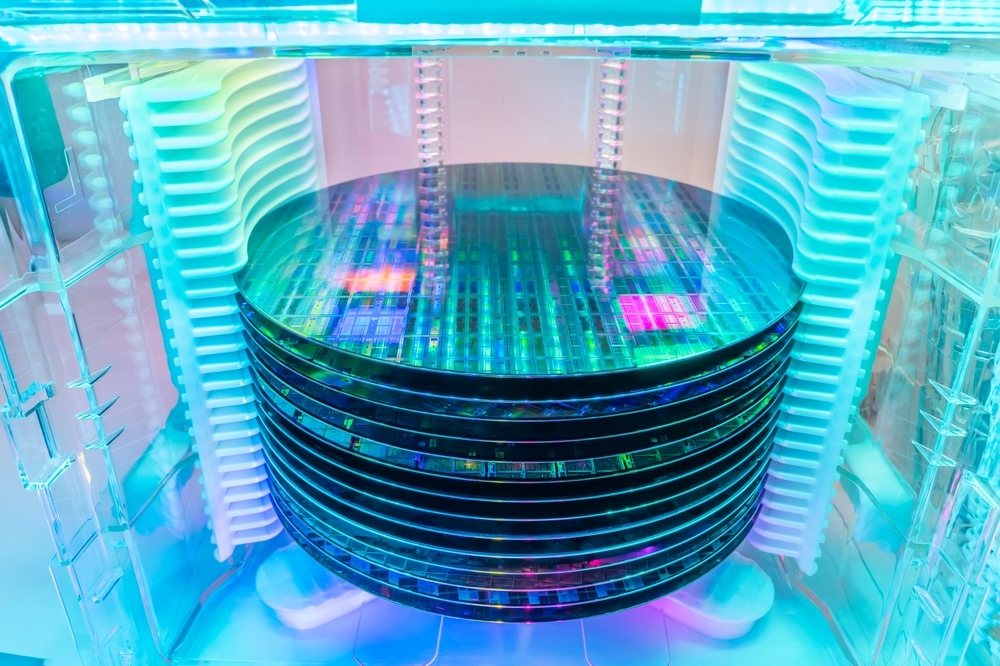

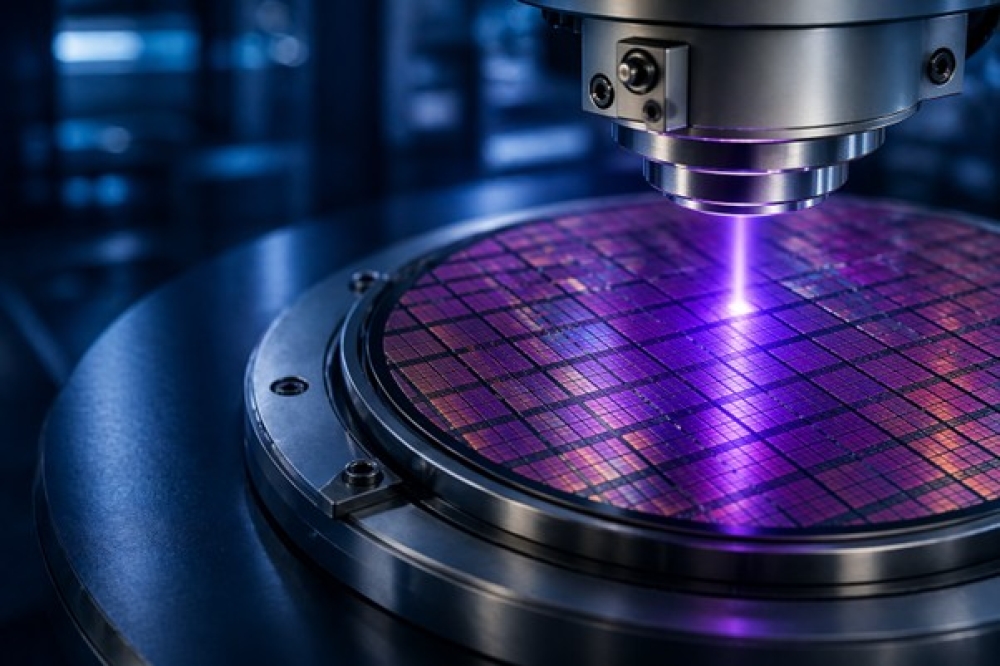

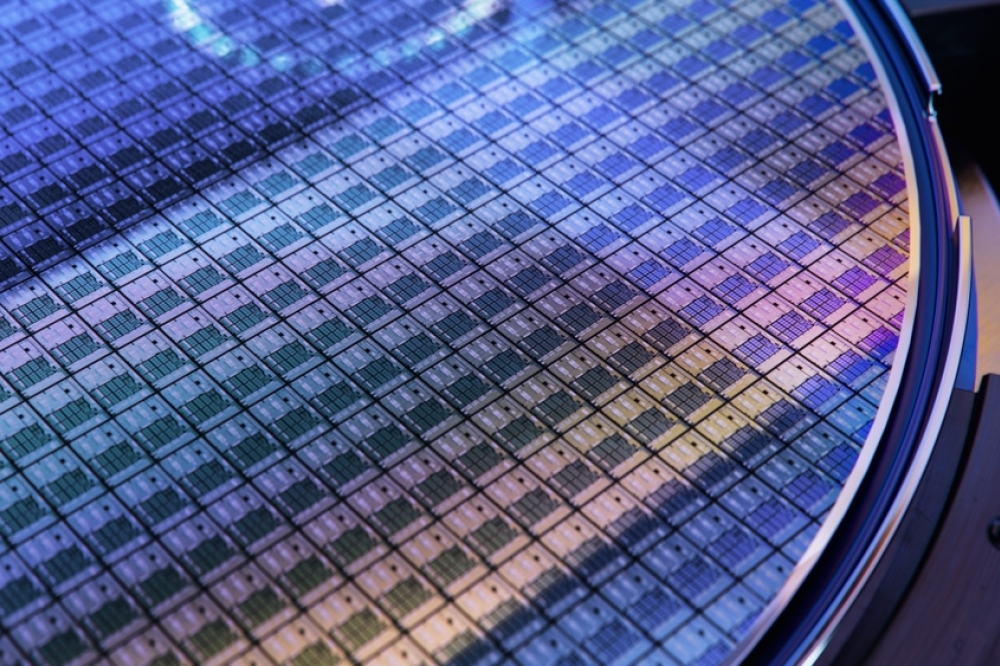

At scale, the challenge shifts from compute availability to power efficiency: how efficiently electricity moves from the grid down to servers and accelerators, and on to the cooling systems that remove their heat. Silicon carbide (SiC) power devices are becoming more common because they offer high power efficiency in the new 800V data center power distribution topologies, lose less energy as heat, and have proven high reliability, which is crucial for facilities that run continuously.
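
One common way to see why higher distribution voltages matter: for a fixed power, current falls in proportion to voltage, and resistive (I²R) loss falls with the square of the current. The sketch below compares two voltages over the same conductor. The rack power, path resistance, and 54 V comparison point are hypothetical values chosen for illustration, not figures from this article.

```python
def distribution_loss_w(power_w: float, voltage_v: float, resistance_ohm: float) -> float:
    """Resistive (I^2 * R) loss in a conductor delivering power_w at voltage_v."""
    current_a = power_w / voltage_v
    return current_a ** 2 * resistance_ohm

RACK_POWER_W = 100_000.0  # hypothetical 100 kW rack
PATH_OHM = 0.0001         # hypothetical 0.1 milliohm distribution path

# 54 V is an assumed lower-voltage baseline used only for comparison.
for volts in (54.0, 800.0):
    loss_w = distribution_loss_w(RACK_POWER_W, volts, PATH_OHM)
    print(f"{volts:>5.0f} V: {RACK_POWER_W / volts:,.0f} A, {loss_w:,.1f} W lost")
```

Under these assumptions, the 800V path carries about 125 A instead of about 1,850 A, and its conduction loss is smaller by a factor of (800/54)², roughly 220. Real systems also include conversion stages, so this is only part of the overall picture.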

Engineered substrates can further improve SiC devices’ performance. SmartSiC™, Soitec’s engineered SiC substrate, consists of a thin, high-quality single-crystal SiC layer on top of an ultra-low-resistivity handle wafer. These substrates have been shown to improve device robustness and reliability while lowering conduction and switching losses. For AI data centers, these substrate-level gains translate into less heat generated across the power conversion stages, an increasingly critical factor as power density rises and data centers scale up.
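
Because power passes through several conversion stages between the grid and the accelerator, per-stage losses compound, and every watt lost becomes heat the cooling system has to remove. The sketch below illustrates that compounding. The four stage efficiencies, the 20% loss reduction, and the 1 MW load are hypothetical assumptions, not measured SmartSiC™ or Soitec results.

```python
from math import prod

def chain_losses(load_kw: float, stage_efficiencies: list[float]) -> tuple[float, float]:
    """Return (end-to-end efficiency, heat in kW) for delivering load_kw through stages in series."""
    eta = prod(stage_efficiencies)      # series stages multiply
    input_kw = load_kw / eta            # grid-side power needed to deliver the load
    return eta, input_kw - load_kw      # everything not delivered is dissipated as heat

# Hypothetical four-stage grid-to-accelerator chain.
baseline = [0.975, 0.98, 0.975, 0.96]
# Same chain, assuming each stage loses 20% less energy.
improved = [1 - 0.8 * (1 - e) for e in baseline]

LOAD_KW = 1000.0  # hypothetical 1 MW IT load

for label, stages in (("baseline", baseline), ("improved", improved)):
    eta, heat_kw = chain_losses(LOAD_KW, stages)
    print(f"{label}: efficiency {eta:.1%}, heat {heat_kw:.0f} kW")
```

With these made-up numbers, end-to-end efficiency rises from about 89.4% to about 91.5%, and heat from the 1 MW load drops from roughly 118 kW to roughly 93 kW. A modest gain at each stage becomes a meaningful reduction in cooling load.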

How AI is shifting infrastructure priorities

AI models are advancing quickly, putting a greater spotlight on the infrastructure needed to scale them efficiently. Under sustained, high-intensity workloads, power delivery, thermal management, and whole-system reliability are fundamental to delivering a cost-effective service.

That reality reinforces the role of engineered substrates, which directly affect power efficiency, heat management, and behavior under sustained load. GPUs remain central, but they cannot solve system-level efficiency constraints on their own. As power and energy constraints tighten, substrate choices become one of the most effective levers for scaling AI infrastructure without compromising efficiency or reliability.

Why AI power is driving deeper US-Europe collaboration

AI infrastructure spans materials, devices, manufacturing, and deployment, and today no single region dominates all four. As AI energy demand rises at unprecedented speed, the stakes for supply chain reliability, scale, and resilience rise with it, making cross-regional collaboration increasingly important.

Europe holds a clear leadership position in advanced semiconductor materials, with deep expertise in engineered substrates and power materials. The United States, by contrast, remains competitive in device design, manufacturing scale, and AI deployment. Together, the two regions cover far more of what AI power systems actually require.

In the past year, European materials suppliers and United States device manufacturers have partnered to validate how materials improvements translate into device-level efficiency gains. Work like this strengthens the foundation for AI power infrastructure, and we’ll continue to see regions partner to close gaps across the semiconductor supply chain as AI scales.

What comes next for AI infrastructure

AI’s next phase will be shaped as much by power delivery and materials as by model capability. The core question isn’t just how fast models can advance, but whether the infrastructure beneath them can keep pace. Increasingly, the answer will depend on silicon, materials, and the global collaborations needed to build AI power systems that scale reliably and sustainably.